Giovanni Stradanus, Illustration of Dante’s Inferno, Canto 20, 1587.

Cory Doctorow is an author, journalist, and activist. His novels for teens and young adults include Homeland, Pirate Cinema, and Little Brother. His novels for adults include The Rapture of the Nerds and Makers, and he has won numerous awards for his writing. His recent nonfiction book Information Doesn’t Want to Be Free addresses copyright, creativity, and success in the digital age. Doctorow is also coeditor of the blog boingboing.net and a special consultant to the Electronic Frontier Foundation, a nonprofit civil liberties group. He also cofounded the UK Open Rights Group. Doctorow spoke at SFMOMA in October 2016 about the value of access to culture, and the relationship between museums, libraries, and technology.

Thank you for coming. I want to draw a connection between the things that happen inside the walls of galleries, museums, libraries, and archives and what’s happening outside of these walls, in our region. The Information Age has been attended by two parallel and contradictory shifts in the way that we think about value. The first is that tech’s rise has come at the same time—and maybe for the same reason—as the rise of neoliberal globalism, which says that everything should be viewed through a market lens, that we should take things apart and put them back together again, as though they were businesses, to find out whether they’re running properly.

Every one of our public institutions is being subjected to this lens, and there have been some very distorting effects. The indiscriminate application of market logic makes a nonsense of activities that are fundamentally nonmarket activities, including archiving and scholarship and cultural preservation and communication—the things that are the business of a museum. To describe the business of museums in market logic is to apply a metaphor that is both highly suspect and highly susceptible to intellectually dishonest manipulation. So, I’d like to make an analogy here that will be familiar to anyone who’s ever worked for a start-up, or has friends who worked for start-ups.

I’d like you to think for a moment of the digitization projects that have been undertaken through public/private partnerships. The Department of Defense (DOD), for example, hired a company called Wazee Media to digitize its archives. Now, in these projects, commercial operators are brought in to digitize public collections, and then they put the digital collections behind paywalls so that they can recoup the cost of digitization. The market logic story goes like this: When a company like Wazee is making a sizeable investment in the archiving of these public assets, it has to recoup its investment. It’s assuming the risks, so it gets the reward. But this is a highly selective way of expressing the way that capitalism works.

Let’s take another look at it. Here in Silicon Valley, and throughout the high-tech world, we have this grand tradition of start-ups that court investors with high-risk, high-reward propositions, from search engines to Bitcoin. And it’s virtually unheard of for start-ups to be profitable from the get-go. Start-ups may run for years before seeing their first dollar in income, and even years more before the income exceeds their costs and becomes profit.

So, entrepreneurs will seek out angel investors, individuals who put early money into the business in return for a generous ownership stake in that business. And almost every angel investment comes to nothing; it’s just money flushed down the toilet. But there’s no shortage of angel investors because the reward for a successful bet is incredible. Being the first investor in a business means that the business pays you a much larger dividend than it pays to any of the later-stage investors, even the ones who put more money in it than you did. If you’ve assumed that early risk, you get the reward.

Now, back to cultural institutions. For decades, for centuries, the public have played the role of angel investors for many of these collections, paying and paying, year after year, to keep them afloat while they seek out their path to profitability. And now these institutions have arrived at their moment in the sun. The world of digitization has unlocked the value latent in their collections. Through digitization, the whole world can now use these collections, simultaneously! Scholars everywhere can text-mine them; they can be used to start new businesses, to create new curricula.

This is the thing that every entrepreneur dreams of: the moment when a weird and unlikely idea is validated by the marketplace, where it arrives at its cultural moment—the moment when the idea for a barebones search engine like Google suddenly rockets to ascendancy and leaves behind Yahoo and AltaVista. At that moment, it’s customary for the angels and the entrepreneurs to seek some deeper pockets, some venture capitalists, and sell them a very small slice of equity in exchange for a very large amount of money, to build out all the infrastructure that you need to handle all that demand. Importantly, though, the angels are not crowded out by this. If the big investor tried to dilute the angels out of existence, the management and the VCs would end up in court faced with a minority shareholder suit that they would lose.

Now, this is exactly what happens when you get a public/private partnership like Wazee and the DOD. We, the public, we’re the angels. We built up all that value in those public assets; the return on our investment is meant to be access to those assets, the right to come and see them, and use them. And the Johnny-come-lately digitization firms are the venture capitalists, the latecomers to the party who only put in their money once our money has paid to bring the enterprise to profit. The risk they assume, the cost of digitization, is infinitesimal compared to our own. And yet they demand terms that result in the confiscation of all of our equity, for accomplishing the relatively minor, low-risk task of taking pictures of our stuff. And management (the government of the neoliberal era) gives it to them. Even in the dubious enterprise of applying market logic to public enterprises, this is a titanic rip-off, and no actual business would get away with it in the real world.

But of course, this is a nonsense from start to finish. The public doesn’t invest in cultural preservation because we see a profitable upside coming down the road. Our cultural institutions tell us who we are, where we’ve been, where we are, and where we’re going. We invest in cultural preservation, archiving, and access because these are public goods. They’re not market activities primarily. Using return on investment to calculate the value of the museum sector is like adding up all the money you spent on raising your children and then handing them a bill for their upbringing when they graduate from high school. It’s what a sociopath does.

The second thing that Silicon Valley has grown up with in the last couple of decades is mass surveillance. I think it’s worth asking, why do we spy on ourselves? Why domestic surveillance? Why bother spying on your own population? I think the short answer is that it’s in the service of social stability. You think that there are people who live within your borders, who don’t think your country is legitimate, and who would like to see it overturned by any means necessary. That’s why, historically, domestic surveillance is concentrated on things like Red Scares and spying on peace activists and so on.

Now, social stability is inversely correlated with wealth disparity. The French scholar Thomas Piketty wrote an amazing book called Capital in the Twenty-First Century, in which he tracks three hundred years of capital flows around the world (how much money was owned by whom, and when), and he uses these painstakingly assembled data sets to do it. It’s an incredible exercise in what you can do with big data. Over and over again, when he talks about wealth disparity, he returns to wealth disparity levels in 1789 in France. If you’re French, you go, “Oh yeah, he’s talking about the moment in which the state became so illegitimate that they gathered up all the rich people and cut their heads off.” If you’re not French, you’re probably like, “What’s the deal with 1789 in France?” But his point is, there’s a moment at which the amount of money that you have to spend to keep people from chopping your head off exceeds the amount of money you would have to spend to make them not want to chop your head off: to build schools, to build hospitals, rather than to pay for cops and spies.

There’s a corollary to that that I don’t think he touches on, because he doesn’t really think much about surveillance, but when you make spying cheaper, the moment at which it’s rational to pay for schools and hospitals grows more distant. So, what’s happened to spying in the last couple of decades? Well, the peak year for surveillance in the GDR, the former East Germany—the most surveilled state in the world at the time—was in 1989. The security force was called the Stasi, and there was one Stasi informant for every 60 people.

About twenty-five years later, in the United States in 2016, the National Security Agency employs about one spook for every ten thousand people that it spies on. In 1989 it took an army to surveil a nation, and in 2016 it just takes a battalion to surveil a planet. Technology has made surveillance a lot more efficient, thanks to things going on in Silicon Valley.
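As a rough check on that comparison, the two ratios can be put side by side. This is only a sketch: the figures are the round numbers quoted in the talk (one informant per 60 people, one employee per ten thousand people), not independent estimates, and the names are made up for illustration.

```python
# Round figures quoted in the talk; treat them as the speaker's
# approximations, not audited data.
STASI_PEOPLE_PER_WATCHER = 60       # GDR, 1989
NSA_PEOPLE_PER_WATCHER = 10_000     # United States, 2016


def efficiency_gain(old_ratio: int, new_ratio: int) -> float:
    """Return how many times more people one watcher covers under new_ratio."""
    return new_ratio / old_ratio


gain = efficiency_gain(STASI_PEOPLE_PER_WATCHER, NSA_PEOPLE_PER_WATCHER)
print(f"One watcher now covers roughly {gain:.0f} times as many people.")
```

On those numbers, each watcher covers well over a hundred times as many people as before, which is the sense in which spying has become cheaper.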

In fact, Silicon Valley has given us the tools to pay the bills for our own surveillance. We buy the phones; we pay the ISP bills; we pay for the service that mines our data, gathers it, and stores it in places where governments can go and raid it, in a kind of public/private partnership from hell. This is the twenty-first-century version of the Chinese government during the Cultural Revolution sending you the bill for the bullet that was used to execute your family, except here, we just pay the bill continuously for the surveillance that’s used to watch us all the time.

You may have seen that all of the Yahoo accounts were stolen sometime in the last five years, probably by a foreign government. And the bad news is that all the data that we’ve collected is someday going to leak. The good news is that we are fast approaching the moment of peak indifference—the moment at which the number of people whose lives have been destroyed by those leaks will never go down again, and so the number of people who are upset by the data collection that gave us those leaks is only ever going to go up. The Panama Papers produced a huge cohort of very rich, powerful people who suddenly care an awful lot about electronic privacy. But people like you and me have a stake in this, too, because last year a couple of million people had their data raided from the IRS.

And I came to the Bay Area about four or five months ago, for this multistakeholder RAND Corporation war game, where we were supposed to figure out what we would do in the event that the US government’s information infrastructure melted down. There were civil libertarians and lawyers and technologists and a ton of spooks, a ton of people from three-letter agencies, cops and spies and government officials, and it was the kind of thing where they come in and it’s like a locked-room escape mystery, except you’re trying to figure out strategies for saving the country when its information infrastructure melts down.

Periodically, they come in and say, “There are people rioting in the streets, and the president wants to know what your recommendation is right now,” to kind of put the pressure on. And every time someone suggested something that would involve spying on everyone all the time, all the spooks and cops in the room said, “No, no, we just can’t do that.” And I couldn’t figure out what was going on until someone said the three magic initials: O-P-M, Office of Personnel Management.

If you want to get security clearance in America, you have to go to the Office of Personnel Management and tell them everything that could be used to blackmail you: The fact that your brother is a junkie. The fact that your mom attempted suicide when you were in high school. The fact that you’re in the closet. All of the things that could ever be used to compromise you. The OPM gathers all that data, and then last year, all twenty-two million of its records were stolen, presumably by the People’s Liberation Army, the Chinese government. (That included all those people’s fingerprints. If you ever needed an illustration of why fingerprints make bad alternatives to passwords, try revoking twenty-two million Americans’ fingerprints and giving them new ones.) So that created twenty-two million people in the halls of power who seriously care about the destiny of their personal data.

When we talk about privacy and we talk about surveillance tech, we’re not arguing about the data that’s already been collected; that data is definitely going to leak. Any data you collect will probably leak. Any data you retain is definitely going to leak. We’re arguing about the data that is going to be collected in the years to come. It’s like arguing about carbon, right? We’re not arguing about whether the carbon in the atmosphere is going to do terrible things to us; that carbon is in the atmosphere, and there’s very little we can do to sequester it now that it’s out there. Very hard to get food coloring out of the swimming pool. What we argue about when we argue about carbon is all the carbon that we’re thinking about putting into the atmosphere. So, when we talk about privacy, we’re talking about decarbonizing the future of the surveillance economy.

We now live in a world made of computers, and that’s why this problem has become especially urgent. Summer 2015: you may remember a couple of fun-loving security researchers realized that 1.4 million Chrysler Jeeps were vulnerable to being controlled over the Internet. A car is a computer that you put your body into, right? And it hurtles down the road at ninety miles an hour (five miles an hour on the 101), and you pray that that computer is taking instructions from you and not from some rando over the Internet.

Every three years, the US Copyright Office asks security researchers how the Digital Millennium Copyright Act (DMCA), and specifically Section 1201 of the DMCA, is working out. And last summer, summer 2015, researchers had discovered blood-curdling vulnerabilities that their general counsel wouldn’t let them talk about: in medical implants, because an insulin pump is a computer that you put in your body; in tractors, because a farm is a computer that we put seeds into; in voting machines, which are computers that we put democracies into.

The idea that we put networking intelligence in everything we have and call it “the Internet of Things” means that we don’t know what it’s for, just like we call 3-D printers 3-D printers because we don’t know what they’re for. But the one thing that we do know is what the Internet of Things business model looks like, and the sad news is that there isn’t one. The Internet of Things business model is: “I’m making hardware, it has a 2 percent margin, that margin falls to 0 or negative if I’m successful, because it gets knocked off in the Pacific Rim and imported into the US, and so the only way I can raise investment capital to keep the lights on is by figuring out how to sell one or both of data or services. So, either I spy on my customers and someone buys that data from me or buys me to get that data, or I figure out a way to make sure that my customers can only install apps that come from my app store and only get parts that come from my authorized dealers and only get devices fixed at my authorized service centers and only buy consumables and media from my authorized channels, and that’s how I maximize my revenue.” So, to maintain that model, you need a way to stop customers from getting away from that model, because that’s not a thing any customer wakes up wanting.

And so you need to figure out how to stop users from reconfiguring their computers to act on their own behalf instead of the manufacturer’s behalf. And to do that, you need a technology; those of you who are in tech, you’ll know it as digital rights management, or DRM. This is the idea that you have a computer whose software only allows the user to do a certain constrained set of actions, and if the user tries to add new software or to go beyond that constraint, the software says no. If you’ve ever bought a DVD while you were traveling overseas and had your DVD player refuse to play it, it’s not because your DVD player couldn’t decode that DVD, it’s because it wouldn’t decode that DVD. It’s perfectly capable of decoding, it just doesn’t want to, and you’re not allowed to tell it to, even though there’s no universe in which buying a DVD is piracy.
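The difference between “couldn’t” and “wouldn’t” can be sketched in a few lines. This is a toy model, not how any real DVD player’s firmware is written: the class names, the `enforce_region` switch, and the region numbers are all hypothetical, chosen only to show a capable decoder sitting behind a policy check that the owner is not allowed to change.

```python
from dataclasses import dataclass


@dataclass
class Disc:
    title: str
    region: int  # hypothetical region number stamped on the disc


class Player:
    """Toy player: always able to decode, but gated by a policy check."""

    def __init__(self, region: int, enforce_region: bool = True):
        self.region = region
        # In this sketch the manufacturer sets the flag; the owner
        # has no sanctioned way to turn it off.
        self.enforce_region = enforce_region

    def can_decode(self, disc: Disc) -> bool:
        # The decoding capability does not depend on the disc's region.
        return True

    def play(self, disc: Disc) -> str:
        if self.enforce_region and disc.region != self.region:
            return "refused"  # wouldn't, not couldn't
        return "playing"


home_player = Player(region=1)
souvenir = Disc(title="Bought overseas", region=2)
print(home_player.can_decode(souvenir), home_player.play(souvenir))
```

The same disc plays fine on an otherwise identical player with the check switched off, which is the whole point: the refusal is a policy decision, not a technical limit.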

These digital rights management systems, they’re protected by this law, the Digital Millennium Copyright Act. Section 1201 states that bypassing those digital rights management systems is a felony punishable by five years in prison and a $500,000 fine for a first offense.

Hewlett-Packard—you’ve probably heard of them—makes printers, and in March 2016, HP pushed out a security update. So, one day you go to your printer and it’s got its little LCD and it says, “There is a security update, press OK to install.” And you press OK to install, and then it silently counts down until September, and then in September, it triggers a thing in all of those updated printers that causes them to no longer accept third-party cartridges—cartridges that might cost 10 percent of what HP’s cartridges cost.

“You know, I wish that I could only buy a printer that would only let me buy ink from the manufacturer at whatever price the manufacturer wanted to charge”—that’s not a thing that anyone ever said while shopping for a printer.

This stuff breaks really easily, and that’s not good news, it’s bad news, because once you’ve decided that you have to stop people from breaking it, you’re like the old woman who swallowed a fly. Now you have to take some measure to stop them from sharing information about how to break it, so the Digital Millennium Copyright Act also makes provision for punishing people who disclose information about how it works. And that’s why security researchers who discover defects in products that have digital rights management in them face jail time, just like the people who figure out how to get around them.

So, if we’re going to decarbonize the surveillance economy, we’re going to have to design the devices that spy on us so that they can be reconfigured by us, so that they are transparent to us, and so that their defects can be quickly rooted out, because the surveillance economy isn’t just dominated by people who deliberately make devices to spy on you; it’s also dominated by people who find mistakes that other people made and use your devices to spy on you. We don’t have an experimental methodology for proving that something is secure. Anyone can design a security system that works so well that they themselves can’t think of a way of breaking it, right? But all that means is you made a security system that works on people who are stupider than you.

And because the people on the side of good who discover vulnerabilities are threatened with legal liability if they disclose those vulnerabilities, the flaws can just fester until someone decides to cause havoc.

We need computers that are designed to be our honest servants, that are designed to be as transparent as possible, that are designed to allow us to nominate any proxy we want—to look through them and find the defects. We need computers whose policy framework is not that manufacturers get to decide the time and manner of disclosures that embarrass them and maybe cost them money, right? Companies are bad custodians of their own dirty laundry, and when you have an interest in their dirty laundry, then you have an interest in those companies not being the custodians of that dirty laundry.

So, what can a museum do about this? What can a library do? What can an archive do? Well, nothing, right? If libraries and archives are glass museums where we stick the past, then there’s nothing that they can do about this. But, if archives and museums are survival kits for rebuilding fallen civilizations, then they can do everything about it.

In most of the world, the library is the last place where you’re welcome on your own terms and not as a life support system for your wallet. In most of the world, the museum is the last place where artifacts are valued because of what they say about our culture, not because of how much we can resell them for. In most of the world, a museum is the last place where art is acknowledged as a continuous collective practice, in which all novelty consists of combining the work of your forebears in novel ways.

So in this sector—in the galleries, libraries, archives, and museum sector—there is a lot of pressure to put digital locks on digital assets: to forbid photography, to hoard the 3-D shape files that describe the archives in the cases, to keep books on shelves instead of converting them to bits, to impose user fees and permissions between the public and your services, because your digitization has been undertaken by a private company. And this is a dead end. If galleries and libraries and archives and museums think that they’re struggling now, what will they be after fees, permissions, and locks have convinced the public that these organizations have nothing to say to them?

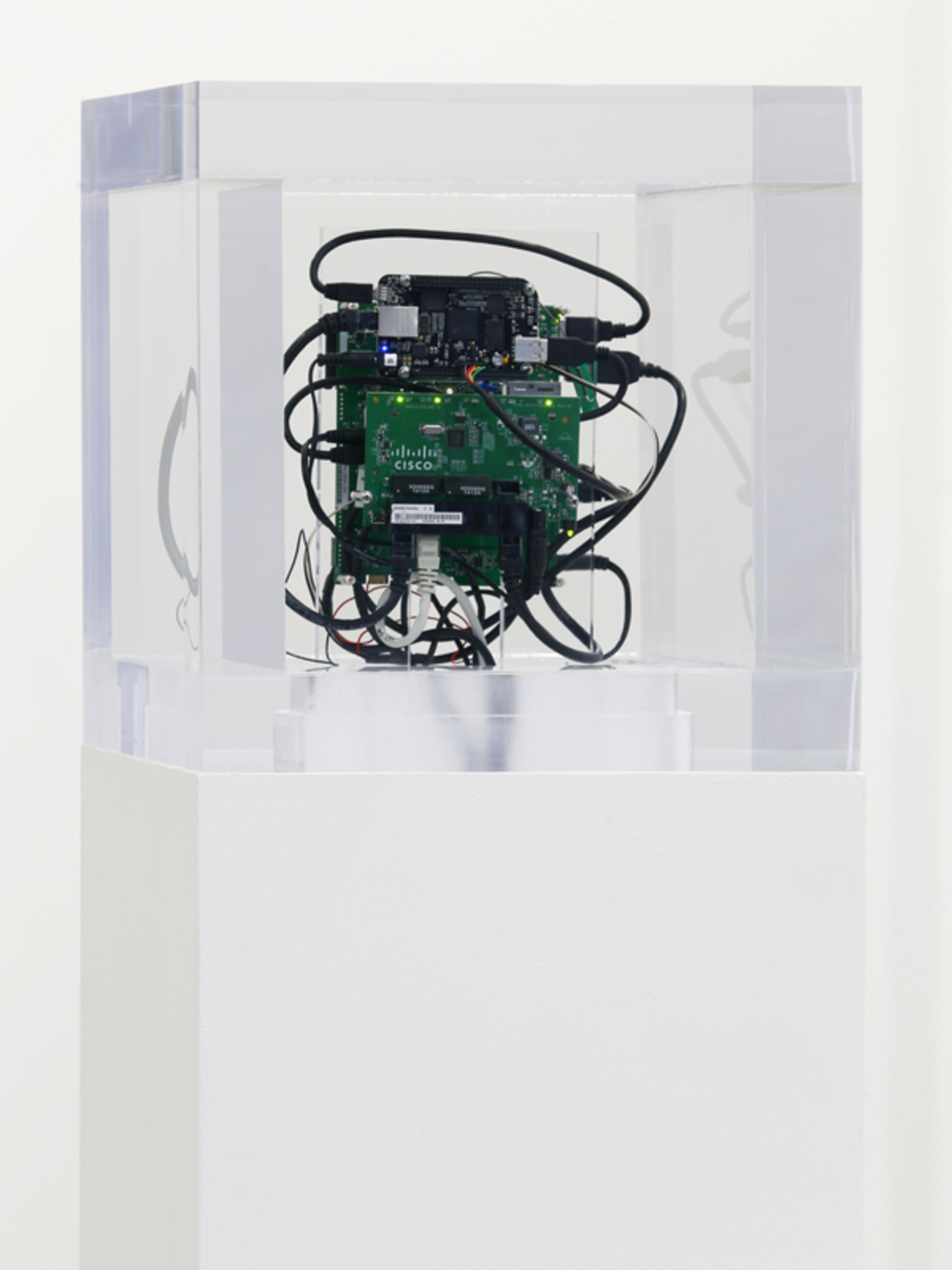

Trevor Paglen, Autonomy Cube, mixed media, 2014. Photo: SFMOMA.

The wealthy few who’ve starved our public coffers of tax dollars and funded the politicians who’ve declared the treasury too empty for our public institutions, they don’t need museums; they’ll buy your items up at fire-sale prices when you collapse and assetize your collection. After all, the only thing that’s nicer than having your name on the wing of a museum is taking all the things in that wing home and leaving them there. Think of the Medicis, not the Carnegies. So, museums can and must do better, and we’ve seen some of the first inklings of that. We have libraries that are running Tor nodes, we have museums that are running Tor nodes under glass cases on plinths as works of art, where all the Internet connectivity in those institutions is anonymized and put into this great, whirling mix master of traffic that helps people all over the world protect their identity and their privacy.

We have libraries and museums and public institutions that have turned themselves into maker spaces, and that teach people how to build their own computers out of the prodigious electronic waste that we generate every day, like a dojo teaching a samurai to make their own sword. If you build your own computer from the metal up, no one will ever tell you how to reconfigure it. We have open data hackathons where museums release their data. We teach curation to a generation overwhelmed by data.

This business of HP—this business of all the companies that say that they get to tell you to a fine grain of detail how you may or may not use their product after they sell it to you—has this notional, metaphorical, intellectual property interest in your real property. It’s a return to feudalism, where only a small elite gets to own property and the rest of us are tenants of that property. Because where copyright lasts for ninety years and where software infiltrates everything that we use and where all software is copyrighted and where copyright entitles companies to tell you how you can use things to a fine grain of detail, nothing can truly be said to belong to you. In fact, nothing can truly be said to belong to any real people. Property becomes the purview of corporations, and these artificial, immortal life forms that we’ve created treat us like their inconvenient gut flora.

The ancients were not like us in some important ways: as far as we can tell, the ancients didn’t have futurity. They didn’t think about their posterity when they buried King Tut with all of his things—it wasn’t so that we could dig him up in thousands of years and marvel at how the world had changed. Through most of antiquity, they lived in a fairly static environment where things just didn’t change all that much. They preserved those things for the afterlife, not for their posterity. But we have posterity, we do think about the future, and we do plan for the future. And in two thousand years our descendants are going to be in their museums arranging our artifacts in cases—artifacts from this dawn of digital history—and they’ll wonder about the curators and the historians and the archivists who were their progenitors, the professionals who, more than anyone else, had it in their power to understand what it meant, what potential this technology had.

We have people today who say that because we digitized so much information, libraries and librarians are irrelevant, as if the job of a librarian were to be a kind of messy, squishy retrieval apparatus for books. But librarians, their job is to help you figure out which of the books you can trust, and why: how to navigate those giant information spaces. The idea that we don’t need librarians because we have the Internet now is like the idea that we don’t need doctors because we have the plague now. So, people working in institutions like this, you do get to choose how history will remember you: whether you’ll be remembered as someone who served a future in which those informational roads were used to conquer and control us, or to give us the freedom to communicate and collaborate to our enduring universal benefit.

For more information about Doctorow’s work with the Electronic Frontier Foundation on digital rights management, visit https://www.eff.org/press/releases/cory-doctorow-rejoins-eff-eradicate-drm-everywhere.